This article is an excerpt from the Shortform book guide to "Life 3.0" by Max Tegmark. Shortform has the world's best summaries and analyses of books you should be reading.

Like this article? Sign up for a free trial here.

What’s Life 3.0 by Max Tegmark about? Will artificial intelligence become smarter than humans?

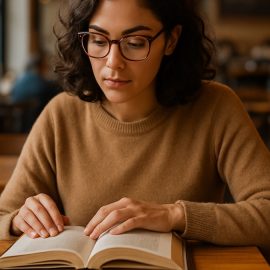

Life on Earth has drastically transformed since it first began. As Max Tegmark says in his book Life 3.0, humans have built a civilization so complex that it would be utterly incomprehensible to the lifeforms that came before us.

Read below for a brief Life 3.0 book overview to understand why Tegmark thinks artificial intelligence is the next revolutionary change.

Life 3.0 by Max Tegmark

Judging by recent technological strides, author Max Tegmark believes that an equally revolutionary change is underway. If an amoeba is “Life 1.0,” and humans are “Life 2.0,” Tegmark contends that an artificial superintelligence could become “Life 3.0.” A power like this could either save or destroy humanity, and Tegmark argues that it’s our responsibility to do everything we can to ensure a positive outcome—before it’s too late.

Tegmark is a physics professor at MIT and president of the Future of Life Institute, a nonprofit dedicated to using technology to avert threats to humanity on a global scale. He wrote the Life 3.0 book in 2017 to popularize urgent AI-related issues and increase the chance that humanity will successfully use AI to create a better future.

The Situation: Artificial Superintelligence Might Appear Soon

Tegmark defines intelligence as the capacity to successfully achieve complex goals. Thus, an “artificial superintelligence” is a computer sophisticated enough to understand and accomplish goals far more capably than today’s humans. For example, a computer that could manage an entire factory at once—designing, manufacturing, and shipping out new products all on its own—would be an artificial superintelligence. By definition, a superintelligent computer would have the power to do things that humans currently can’t; thus, it’s likely that its invention would drastically change the world.

Tegmark asserts that if we ever invent artificial superintelligence, it will probably occur after we’ve already created “artificial general intelligence” (AGI). This term refers to an AI that can accomplish any task with at least human-level proficiency—including the task of designing more advanced AI.

Experts disagree regarding how likely it is that computers will reach human-level general intelligence. Some dismiss it as an impossibility, while others only disagree on when it will probably occur. According to a survey Tegmark conducted at a 2015 conference, the average AI expert is 50% certain that we’ll develop AGI by 2055.

AGI is important because, in theory, a computer that can redesign itself to be more intelligent can use that new intelligence to redesign itself more quickly. AI experts call this hypothetical accelerating cycle of self-improvement an intelligence explosion. If an intelligence explosion were to happen, the most sophisticated computer on the planet could advance from mildly useful AGI to world-transforming superintelligence in a remarkably short time—perhaps just a few days or hours.

Evidence That Artificial Superintelligence Is Possible

The idea that we could build a computer that’s smarter than us may seem far-fetched, but some evidence indicates that such technology is on its way, according to Tegmark.

First, the artificial intelligence we’ve created so far is functioning more and more like AGI. Researchers have developed a new way to design sophisticated AI called deep reinforcement learning. Essentially, they’ve created computers that can repeatedly modify themselves in an effort to accomplish a certain goal. This means that machines can now use the same process to learn different skills—a necessary component of AGI. Currently, there are many human skills that developers can’t teach to computers using deep reinforcement learning, but that list is becoming shorter.

Second, Tegmark asserts that given everything we know about the universe, there’s no obvious reason to believe that artificial superintelligence is impossible. Although it may seem like our brains possess unique creative powers, they store and process information in much the same way that computers do. The information in our heads is a biological pattern rather than a digital one, but the information itself is the same no matter what material it’s encoded with. In theory, computers can do everything our brains can do.

Tegmark concedes that AGI and artificial superintelligence might still be a pipe dream, impossible to create for some reason we don’t yet see. However, he contends that if there’s even a small chance that an artificial superintelligence will exist in the near future, it’s our responsibility to do anything in our power to ensure that it has a positive impact on humanity. This is because artificial superintelligence has the power to completely transform the world—or end it—as we’ll see next.

The Possibilities: How Could Artificial Superintelligence Change the World?

So far, we’ve explained that an artificial superintelligence is a technology that would greatly surpass human capabilities, and we’ve argued why it’s possible that artificial superintelligence might someday exist. Now, let’s discuss what this means for us humans.

We’ll explain why a superintelligence’s “goal” is the primary factor that will determine how it will change the world. Then, we’ll explore how much power such a superintelligence would have. Finally, we’ll discuss what might happen to humanity after a powerful superintelligence enters the world, investigating three optimistic scenarios followed by three pessimistic ones.

The Outcome Depends on the Superintelligence’s Goal

Tegmark asserts that if an artificial superintelligence comes into being, the fate of the human race depends on what that superintelligence sets as its goal. For instance, if a superintelligence pursues the goal of maximizing human happiness, it could create a utopia for us. If, on the other hand, it sets the goal of maximizing its intelligence, it could kill humanity in its efforts to convert all matter in the universe into computer processors.

It may sound like science fiction to say that an advanced computer program would “have a goal,” but this is less fantastical than it seems. An intelligent entity doesn’t need to have feelings or consciousness to have a goal; for instance, we could say an escalator has the “goal” of lifting people from one floor to another. In a sense, all machines have goals.

One major problem is that the creators of an artificial superintelligence wouldn’t necessarily have continuous control over its goal and actions, argues Tegmark. An artificial superintelligence, by definition, would be able to solve its goal more capably than humans can solve theirs. This means that if a human team’s goal was to halt or change an artificial superintelligence’s current goal, the AI could outmaneuver them and become uncontrollable.

For example: Imagine you program an AI to improve its design and make itself more intelligent. Once it reaches a certain level of intelligence, it could predict that you would shut it off to avoid losing control over it as it grows. The AI would realize that this would prevent it from accomplishing its goal of further improving itself, so it would do whatever it could to avoid being shut off—for instance, by pretending to be less intelligent than it really is. This AI wouldn’t be malfunctioning or “turning evil” by escaping your control; on the contrary, it would be pursuing the goal you gave it to the best of its ability.

The Possible Extent of AI Power

Why would an artificial superintelligence’s goal have such a dramatic impact on humanity? An artificial superintelligence would use all the power at its disposal to accomplish its goal. This is dangerous because such a superintelligence could theoretically gain an unimaginable amount of power—enough to completely transform our society with a negligible amount of effort.

According to Tegmark, although an artificial superintelligence is a digital program, it could easily exert power in the real world. For instance, it could make money selling digital goods such as software applications, then use those funds to bribe humans into unknowingly working for it (perhaps posing as a human hiring manager on digital job listing platforms). An AI controlling a Fortune 500-sized human task force could do almost anything—including creating robots that the AI could control directly.

Tegmark asserts that, in theory, an artificial superintelligence could eventually attain godlike power over the universe. By using its intelligence to create increasingly advanced technology, an AI could eventually create machines able to rearrange the fundamental particles of matter—turning anything into anything else—as well as generate nearly unlimited energy to power those machines.

Optimistic Possibilities for Humanity

What might happen to humanity if an artificial superintelligence wields nearly unlimited power over the world in service of a single goal? Tegmark describes a number of possible outcomes, each of which results in a wildly different way of life for humans—or the end of human life.

Let’s begin by discussing three scenarios in which the AI’s goal, whatever it may be, allows humans to live relatively happy lives.

Possibility #1: Friendly AI Takes Over

First, Tegmark imagines that an artificial superintelligence could overthrow existing human power structures and use its vast intelligence to create the best possible world for humanity. No one could challenge the AI’s ultimate authority, but few people would want to, since they have everything they need to live a fulfilling life.

Tegmark clarifies that this isn’t a world designed to maximize human pleasure, which would mean continuously injecting every human with some kind of pleasure-inducing chemical. Rather, this is a world in which humans are free to continuously choose the kind of life they want to live from a diverse set of options. For instance, one human could choose to live in a non-stop party, while another could decide to live in a Buddhist monastery where rowdy, impious behavior wouldn’t be allowed. No matter who you are or what you want, there would be a “paradise” available for you to live in, and you could move to a new one at any time.

Possibility #2: Friendly AI Stays Hidden

Tegmark supposes another positive scenario that’s a bit different: Instead of completely taking over the world, an artificial superintelligence does everything within its power to improve human lives while keeping its existence a secret.

This could happen if the artificial superintelligence—or someone influencing its goals—concluded that to be as happy and fulfilled as possible, humans need to feel in control of their destiny. Arguably, if you knew that an all-powerful computer could give you anything you wanted (as in the previous optimistic scenario), you might still feel like your life is meaningless and be less satisfied because you don’t have control over your life. In this case, the best thing a godlike AI could do for you is help without your knowledge.

Possibility #3: AI Protects Humanity From AI

Third, Tegmark imagines a scenario in which humans create an artificial superintelligence with the sole purpose of preventing other superintelligences from coming into existence. This allows humans to continue developing more advanced technology without worrying about the potential dangers of another AI.

According to Tegmark, the advanced technology humans could develop in a world free from superintelligence would eventually allow us to create a bountiful classless society. Robots are able to build anything humans might want, making scarcity a thing of the past. Since robots are constantly generating surplus wealth, the government can give everyone a universal basic income (UBI) that’s high enough to purchase anything they could possibly need. People are free to work for more money, but finding a productive job is near-impossible since everything people might buy is already given to them for free.

Pessimistic Possibilities for Humanity

Next, let’s take a look at some of the existential dangers that an artificial superintelligence poses. Here are three scenarios in which the AI’s goal ruins humans’ chances to live a satisfying life.

Possibility #1: AI Kills All Humans

Tegmark contends that an artificial superintelligence may end up killing all humans in service of some other goal. If it doesn’t value human life, it could feasibly end humanity just for simplicity’s sake—to reduce the chance that we’ll do something to interfere with its mission.

If an artificial superintelligence decided to drive us extinct, Tegmark predicts that it would do so by some means we currently aren’t aware of (or can’t understand). Just as humans could easily choose to hunt an animal to extinction with weapons the animal wouldn’t be able to understand, an artificial intelligence that’s proportionally smarter than us could do the same.

Possibility #2: AI Cages Humanity

Another possibility is that an artificial intelligence chooses to keep humans alive, but it doesn’t put in the effort to create a utopia for us. Tegmark argues that an all-powerful superintelligence might decide to keep us alive out of casual curiosity. In this case, an indifferent superintelligence would likely create a relatively unfulfilling cage in which we’re kept alive but feel trapped.

Possibility #3: Humans Abuse AI

Finally, Tegmark imagines a future in which humans gain total control over an artificial superintelligence and use it for selfish ends. Theoretically, someone could use such a machine to become a dictator and oppress or abuse all of humanity.

What Should We Do Now?

We’ve covered a range of possible outcomes of artificial superintelligence, from salvation to disaster. However, all these scenarios are merely theoretical—let’s now discuss some of the obstacles we can address today to help create a better future.

We’ll first briefly disregard the idea of superintelligence and discuss some of the less speculative AI-related issues society needs to overcome in the near future. Then, we’ll conclude with some final thoughts on what we can do to improve the odds that the creation of superintelligence will have a positive outcome.

Short-Term Concerns

The rise of an artificial superintelligence isn’t the only thing we have to worry about. According to Tegmark, it’s likely that rapid AI advancements will create numerous challenges that we as a society need to manage. Let’s discuss:

- Concern #1: Economic inequality

- Concern #2: Outdated laws

- Concern #3: AI-enhanced weaponry

Concern #1: Economic Inequality

First, Tegmark argues that AI threatens to increase economic inequality. Generally, as researchers develop the technology to automate more types of labor, companies gain the ability to serve their customers while hiring fewer employees. The owners of these companies can then keep more profits for themselves while the working class suffers from fewer job opportunities and less demand for their skills. For example, in the past, the invention of the photocopier allowed companies to avoid paying typists to duplicate documents manually, saving the company owners money at the typists’ expense.

As AI becomes more intelligent and able to automate more kinds of human labor at lower cost, this asymmetrical distribution of wealth could increase.

Concern #2: Outdated Laws

Second, Tegmark contends that our legal system could become outdated and counterproductive in the face of sudden technological shifts. For example, imagine a company releases thousands of AI-assisted self-driving cars that save thousands of lives by being (on average) safer drivers than humans. However, these self-driving cars still get into some fatal accidents that wouldn’t have occurred if the passengers were driving themselves. Who, if anyone, should be held liable for these fatalities? Our legal system needs to be ready to adapt to these kinds of situations to ensure just outcomes while technology evolves.

Concern #3: AI-Enhanced Weaponry

Third, AI advancements could drastically increase the killing potential of automated weapons systems, argues Tegmark. AI-directed drones would have the ability to identify and attack specific people—or groups of people—without human guidance. This could allow governments, terrorist organizations, or lone actors to commit assassinations, mass killings, or even ethnic cleansing at low cost and minimal effort. If one military power develops AI-enhanced weaponry, other powers will likely do the same, creating a new technological arms race that could endanger countless people around the world.

Long-Term Concerns

How should we address the long-term concerns related to AI, including potential superintelligence creation? Because there’s little we know for sure about the future of AI, Tegmark contends that one of humanity’s top priorities should be AI research. The stakes are high, so we should try our best to discover ways to control or positively influence an artificial superintelligence.

———End of Preview———

Like what you just read? Read the rest of the world's best book summary and analysis of Max Tegmark's "Life 3.0" at Shortform.

Here's what you'll find in our full Life 3.0 summary:

- That an artificial intelligence evolution will make us the simple lifeforms

- The evidence that artificial superintelligence might soon exist

- Whether or not we should be alarmed about the emergence of AI